B2B SaaS · Productivity.

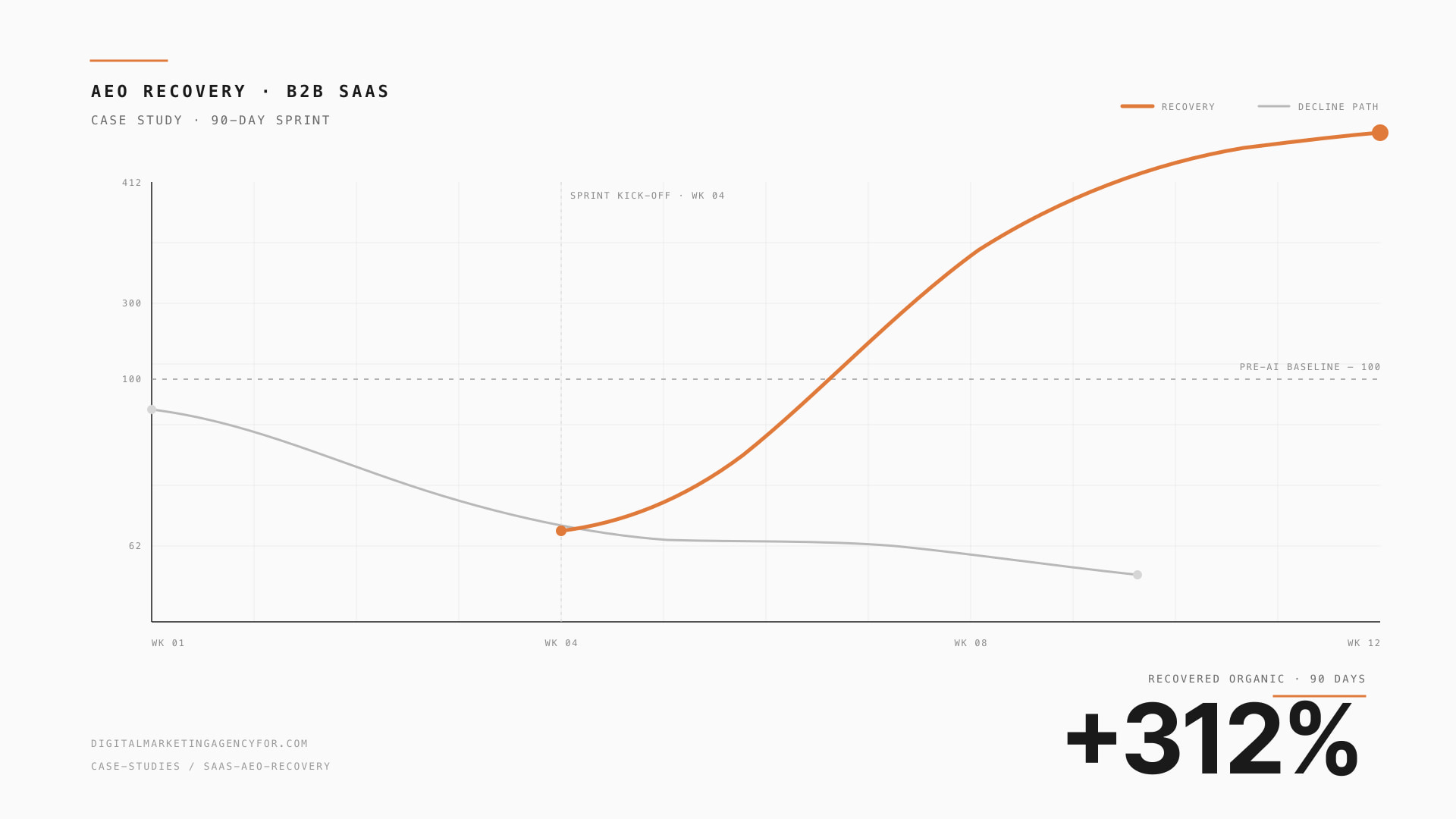

+312% organic in 90 days, cited inside ChatGPT + Perplexity.

Lost 38% of organic clicks in 5 months.

When Google launched AI Overviews on commercial queries, this client (a mid-market B2B productivity SaaS) saw clicks fall 38% in 5 months while impressions held flat. Flat impressions with falling clicks means click-through rate dropped by roughly the same 38%, which is consistent with users reading the AI Overview and never clicking through.

Their content was already strong. The problem was structural: pages were not formatted to be quoted by AI systems, there was no schema graph and no llms.txt, and entity authority was weak. Rank tracking alone was no longer enough in their category.

4-week recovery sprint.

Audit + baseline

Answer engine optimization (AEO) scoring across ChatGPT, Perplexity, Gemini, and Claude on the top 20 prompts. Schema graph audit. llms.txt review. Google Search Console (GSC) click-loss quantification per query.
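The per-query click-loss step can be sketched roughly as below, assuming two Search Console performance exports (one per window) have already been loaded into dictionaries keyed by query. The `QueryStats` type, the 10% impression tolerance, and the sample figures are illustrative assumptions, not the client's data or the tooling actually used.

```python
from dataclasses import dataclass


@dataclass
class QueryStats:
    clicks: int
    impressions: int


def click_loss(before, after, impression_tolerance=0.10):
    """Rank queries by clicks lost and flag those whose impressions held roughly flat."""
    rows = []
    for query, b in before.items():
        a = after.get(query, QueryStats(0, 0))
        lost = b.clicks - a.clicks
        if lost <= 0:
            continue  # no loss on this query (also skips zero-click baselines)
        imp_change = (a.impressions - b.impressions) / b.impressions if b.impressions else 0.0
        rows.append({
            "query": query,
            "clicks_lost": lost,
            "click_change_pct": round(100 * (a.clicks - b.clicks) / b.clicks, 1),
            # Flat impressions plus lost clicks suggests a CTR problem, not a ranking loss.
            "flat_impressions": abs(imp_change) <= impression_tolerance,
        })
    return sorted(rows, key=lambda r: r["clicks_lost"], reverse=True)


# Invented sample data for illustration only.
before = {
    "team task tracker": QueryStats(1000, 20000),
    "kanban software pricing": QueryStats(400, 9000),
}
after = {
    "team task tracker": QueryStats(620, 19800),
    "kanban software pricing": QueryStats(390, 5000),
}
report = click_loss(before, after)
```

Queries flagged with `flat_impressions` are the ones where visibility held but clicks did not, which is the pattern described for this client.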

Schema + llms.txt rollout

Full Organization + Person + Service + FAQPage + Article + Speakable graph deployed. llms.txt drafted from real site content.
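As a minimal sketch of what a linked schema graph looks like, the snippet below builds a JSON-LD `@graph` in which Organization, Person, Service, and Article nodes reference each other by `@id`. The domain, names, and URLs are hypothetical placeholders, FAQPage is left out for brevity, and `dangling_refs` is an illustrative helper rather than part of any library.

```python
import json

SITE = "https://example.com"  # hypothetical domain, not the client's


def build_graph() -> dict:
    """Return one JSON-LD document whose nodes reference each other by @id."""
    org = f"{SITE}/#organization"
    author = f"{SITE}/team/jane-doe/#person"
    return {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Organization", "@id": org, "name": "Example Co", "url": SITE},
            {"@type": "Person", "@id": author, "name": "Jane Doe",
             "jobTitle": "Head of Product", "worksFor": {"@id": org}},
            {"@type": "Service", "@id": f"{SITE}/#service",
             "name": "Team task management", "provider": {"@id": org}},
            {"@type": "Article", "@id": f"{SITE}/guides/sprint-planning/#article",
             "headline": "Sprint planning for distributed teams",
             "author": {"@id": author}, "publisher": {"@id": org},
             # speakable points at the page sections most suited to text-to-speech
             "speakable": {"@type": "SpeakableSpecification", "cssSelector": [".tldr"]}},
        ],
    }


def dangling_refs(graph: dict) -> set:
    """Find {"@id": ...} references that point at no node defined in the graph."""
    defined = {node["@id"] for node in graph["@graph"]}
    missing = set()
    for node in graph["@graph"]:
        for value in node.values():
            if isinstance(value, dict) and set(value) == {"@id"} and value["@id"] not in defined:
                missing.add(value["@id"])
    return missing


graph = build_graph()
json_ld = json.dumps(graph, indent=2)  # string to embed in the page
```

Referencing nodes by `@id` instead of repeating them keeps one canonical Organization and Person per site, and a check like `dangling_refs` catches references that resolve to nothing.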

Top 60 pages rebuilt

TL;DR opening blocks. Structured Q&A. Citable stats from their own usage data. Author Person schema with credentials.
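One way the structured Q&A on a rebuilt page could be exposed as FAQPage markup is sketched below. The URL and the question and answer text are invented placeholders, and `faq_page` and `to_script_tag` are hypothetical helper names, not the functions used in the engagement.

```python
import json


def faq_page(url: str, qa_pairs) -> dict:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "@id": f"{url}#faq",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


def to_script_tag(data: dict) -> str:
    """Serialize JSON-LD for embedding; escape "</" so the script tag cannot close early."""
    payload = json.dumps(data, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'


# Invented example content.
qa = [
    ("What is sprint planning?", "A meeting where the team agrees what to deliver next."),
    ("How long should it take?", "Commonly a few hours for a two-week sprint."),
]
page = faq_page("https://example.com/guides/sprint-planning/", qa)
tag = to_script_tag(page)
```

The markup should mirror the visible Q&A on the page word for word, so the text an AI system quotes matches what a visitor sees.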

Entity SEO + monitoring

Wikipedia + Wikidata work (eligible for category-leader status). AthenaHQ + Otterly tracking live.

"We thought we were dying; clicks were dropping monthly. Six weeks after the rebuild, we were getting cited inside ChatGPT for our category prompt. By month 3, organic was 312% above pre-loss baseline."

Three months post-sprint.

Same measurement method used for both windows. Numbers pulled from primary platform sources at write-up. Editorial standard at /about/editorial-standards/.

Stack we shipped.

Common questions about this engagement.

How was the B2B SaaS · Productivity result measured?

Per the methodology callout above: a baseline window of 90 days pre-engagement compared against a result window of 90 days post-engagement using last-touch attribution sourced from primary platforms (GA4, Shopify, Stripe, ad-platform UIs, Klaviyo where applicable). The same measurement method is used for both windows; we do not change attribution mid-engagement to make the result look better.
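To make the window comparison concrete, the arithmetic is a plain percent change between two equal-length windows. The sketch below uses round illustrative totals, not the client's figures; it shows that a result "312% above baseline" is 4.12 times the baseline, and that a 38% loss leaves 62% of the baseline.

```python
def pct_change(baseline: float, result: float) -> float:
    """Percent change of the result window relative to the baseline window."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100 * (result - baseline) / baseline


# Illustrative totals for two 90-day windows (invented numbers).
lift = pct_change(baseline=1000, result=4120)
loss = pct_change(baseline=1000, result=620)
```

Both windows must cover the same number of days and use the same metric and source for the comparison to be meaningful.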

What was the time-to-result?

For B2B SaaS · Productivity, the bulk of the lift landed within the engagement window shown in the approach timeline. Compounding effects on slower-cycle channels (organic SEO, AEO citation share, lifecycle list growth) typically continue accruing for 6–12 months after the active engagement closes. We do not publish "uplift" numbers from a single inflated week; the result is the steady-state measurement window.

Could you replicate this for my brand?

Honest answer: it depends on category fit, current baseline, and execution discipline. The case is evidence that the result has happened in similar mid-market brands; it is not a guarantee of replication. Our 7-day Free Growth Audit is the structured way to find out: it benchmarks your specific situation against category leaders and relevant case studies, identifies the recoverable gap, and ranks the Top-5 fixes by revenue impact. The audit is delivered free regardless of whether you go on to engage.

Is the brand identifiable, and can I get a reference?

B2B SaaS · Productivity is anonymised under mutual NDA — most mid-market brands will not attach their name to public revenue numbers, and our NDA terms typically prohibit it. Reference contacts (real, reachable people who worked on this engagement) are available on request after counter-NDA, returned within 48 working hours of brief acceptance. The published metrics are pulled from primary platform sources at the time of write-up; the editorial standards are at /about/editorial-standards/ and the case-studies policy is at /about/case-studies-policy/.

Get your AEO score in 60 seconds.

Submit your domain. Our AEO Score Tool benchmarks visibility across ChatGPT, Perplexity, Gemini, and Claude — and shows the gap to your category leader.